Founders, investors, and advisors are increasingly turning to AI assistants to read, interrogate, and explain valuation reports. Here’s what changes, what gets better, and where the risks sit — and why we just shipped a Markdown export of every Equidam valuation report, built specifically for AI legibility.

A new layer on top of the valuation report

AI assistants add a fundamentally new layer on top of the valuation report, and that layer is reshaping three things at once.

1. Information gathering gets faster

A typical Equidam valuation report runs to about 30 pages and covers five valuation methods, a full set of financial projections, qualitative scores across six dimensions, industry benchmarks, and a methodology appendix. That’s dense by design: startup valuation is genuinely multi-dimensional. But it also meant that a founder hunting for a specific number (“what discount rate did the DCF with EBITDA method apply in year 4?”) could spend ten minutes scrolling to find it.

With a properly structured report dropped into an AI assistant, that question is answered in seconds, with the relevant context (the WACC build-up, the survival probability adjustment, the assumed terminal growth) pulled in alongside it. The AI doesn’t replace the report — it makes the report navigable in natural language.
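For readers meeting these terms for the first time, a toy version of the arithmetic may help. What follows is a minimal sketch, assuming a textbook CAPM cost of equity with a country risk premium added directly (for an all-equity startup, WACC reduces to the cost of equity) and each year’s cash flow weighted by a cumulative survival probability. The function names and inputs are hypothetical; Equidam’s actual build-up is set out in its methodology appendix and may differ.

```python
"""Toy discount-rate and survival-weighted PV arithmetic (illustrative only)."""


def cost_of_equity(risk_free: float, beta: float, equity_risk_premium: float,
                   country_risk_premium: float = 0.0) -> float:
    """CAPM cost of equity, with a country risk premium added directly.

    Adding the country premium outside beta is one convention among several;
    it is an assumption here, not necessarily the report's method.
    """
    return risk_free + beta * equity_risk_premium + country_risk_premium


def survival_adjusted_pv(cash_flows: list[float], rate: float,
                         survival: list[float]) -> float:
    """Discount yearly cash flows (year 1 first) at `rate`, weighting each by
    the cumulative probability that the company survives to that year."""
    if len(cash_flows) != len(survival):
        raise ValueError("need one survival probability per cash flow")
    return sum(
        cf * p_alive / (1 + rate) ** year
        for year, (cf, p_alive) in enumerate(zip(cash_flows, survival), start=1)
    )


if __name__ == "__main__":
    # Hypothetical inputs: 3% risk-free, beta 1.5, 5% ERP, 1% country premium.
    r = cost_of_equity(0.03, 1.5, 0.05, 0.01)
    print(f"discount rate: {r:.1%}")  # 3% + 1.5 * 5% + 1% = 11.5%
    pv = survival_adjusted_pv([100.0, 200.0, 400.0], r, [0.9, 0.8, 0.7])
    print(f"survival-adjusted PV: {pv:.2f}")
```

The survival weighting is what separates this from a plain DCF: a cash flow the company is only 70% likely to live to see is counted at 70% of its discounted value.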

2. Information output crosses skill boundaries

The bigger shift — and the one we think is most undervalued — is translation across knowledge backgrounds.

Most early-stage startups have founding teams with mixed financial literacy. The CEO might be a deep-tech engineer, the second co-founder a designer, and the lead angel a domain operator with no formal finance training. Historically, a valuation report addressed all three at the same level, usually a finance-native one, so two of the three either skimmed it or relied on the third to interpret it.

With AI assistants, that asymmetry collapses. The same Equidam report can be queried at three different altitudes:

– A finance-native investor can ask about the implied EV/EBITDA at exit and the sensitivity to terminal growth.

– A technical founder can ask “explain what WACC means and why it changes the valuation.”

– A designer-cofounder can ask “summarise the strengths and weaknesses of this valuation in plain English.”
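The finance-native question in the first bullet can be made concrete. This is a minimal sketch, assuming a Gordon growth (perpetuity) terminal value, TV = FCF_N × (1 + g) / (r − g), and an implied exit multiple of TV divided by final-year EBITDA. The inputs are invented, and a real report may define the terminal year or the multiple’s denominator differently.

```python
"""Gordon-growth terminal value and its sensitivity to terminal growth."""


def gordon_terminal_value(final_fcf: float, rate: float, growth: float) -> float:
    """Value at the end of the forecast: FCF_N * (1 + g) / (r - g)."""
    if growth >= rate:
        raise ValueError("terminal growth must be below the discount rate")
    return final_fcf * (1 + growth) / (rate - growth)


def implied_exit_multiple(terminal_value: float, final_ebitda: float) -> float:
    """Implied EV/EBITDA: terminal value over final-year EBITDA."""
    return terminal_value / final_ebitda


if __name__ == "__main__":
    # Hypothetical final-year figures and a 12% discount rate.
    final_fcf, final_ebitda, rate = 100.0, 150.0, 0.12
    for g in (0.01, 0.02, 0.03):
        tv = gordon_terminal_value(final_fcf, rate, g)
        multiple = implied_exit_multiple(tv, final_ebitda)
        print(f"g = {g:.0%}: TV = {tv:,.0f}, implied EV/EBITDA = {multiple:.1f}x")
```

In this toy example each one-point step in g moves the terminal value by more than 10%, which is why the sensitivity is worth asking about.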

The same source document, three different interpretive layers, one question each. This materially increases how much of the team can actually engage with the company’s financial position, and how prepared the whole founding team is going into an investor conversation.

3. The perils: when stakes are high, every number matters

Here’s where it gets serious.

A valuation isn’t a marketing artefact. It anchors fundraising, ESOP allocations, tax filings (409A in the US, DCF-based fair-value methods in Europe), shareholder agreements, and exit negotiations. Each number is potentially load-bearing. A misread WACC, a misattributed terminal value, a confused growth rate — these compound into real money and real disputes.

And AI assistants, today, make two classes of mistake that are easy to overlook:

Parsing errors on the input side. PDFs are a hostile format for LLMs. Tables get linearised. Numbers detach from their column headers. Year labels and row labels collide. Excel files are slightly better but lose the explanatory text that surrounds the numbers. The result: the AI confidently answers a question based on data it has half-misread, and you have no easy way to spot it.

Hallucination on the output side. When an AI assistant can’t find a number, the well-behaved ones say so. The less well-behaved ones invent one. Either class of mistake is corrosive when the stakes are a $5M round or a regulator-facing filing.

This is the bit that doesn’t change with AI: when the stakes are high, every number needs to be double-checked, and every formula needs to be sound. The methodology has to be defensible. The benchmarks have to be sourced. The assumptions have to be traceable to a real input.
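One cheap guard when double-checking is to confirm that every figure an assistant quotes actually appears in the source file. Below is a crude sketch: an illustration, not a feature of Equidam or any AI tool. The hypothetical `unverified_numbers` helper pulls numeric tokens from the answer and from the source and reports any the source never mentions. It ignores signs and does not reconcile formats such as 2.0 versus 2 or 12.5% versus 0.125, so an empty result is a reason to keep reading, not proof of correctness.

```python
"""Crude check: which figures in an AI answer never appear in the source?"""
import re

# Digits with optional thousands separators and an optional decimal part.
# Signs, units and percent symbols are deliberately ignored.
NUMBER = re.compile(r"\d[\d,]*(?:\.\d+)?")


def extract_numbers(text: str) -> set[str]:
    """Return the numeric tokens in `text`, with thousands separators removed."""
    return {match.group().replace(",", "") for match in NUMBER.finditer(text)}


def unverified_numbers(answer: str, source: str) -> set[str]:
    """Numbers quoted in `answer` that do not occur anywhere in `source`."""
    return extract_numbers(answer) - extract_numbers(source)


if __name__ == "__main__":
    source = "| Discount rate (year 4) | 12.5% |\n| Terminal growth | 2.0% |"
    answer = "The year 4 discount rate is 12.5% and terminal growth is 3%."
    print(unverified_numbers(answer, source))  # {'3'}
```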

Why the underlying valuation has to be right in the first place

This is where we want to be explicit about what Equidam brings to this shift.

We’ve valued over 160,000 companies over more than a decade. Every one of those valuations runs through the same methodology engine: five recognised valuation methods (Scorecard, Checklist, VC Method, DCF with Long-Term Growth (LTG), DCF with EBITDA Multiple), weighted by company stage, blended with a direct multiples view, and cross-checked against Aswath Damodaran’s industry datasets, which we refresh annually.
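Stage weighting is, at its core, a weighted average of the method outputs. This is a minimal sketch, assuming a plain normalised weighted mean; the valuations and weights in the example are invented for illustration and are not Equidam’s stage weights.

```python
"""Stage-weighted blend of several valuation methods (illustrative weights)."""


def blended_valuation(values: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted mean of method outputs; weights are normalised to sum to 1."""
    if set(values) != set(weights):
        raise ValueError("every method needs exactly one weight")
    total = sum(weights.values())
    if total <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(values[m] * weights[m] for m in values) / total


if __name__ == "__main__":
    # Invented outputs (EUR) and invented pre-revenue weights, for illustration.
    values = {
        "Scorecard": 4_000_000, "Checklist": 3_600_000, "VC Method": 5_000_000,
        "DCF with LTG": 2_800_000, "DCF with EBITDA Multiple": 3_200_000,
    }
    weights = {
        "Scorecard": 0.3, "Checklist": 0.3, "VC Method": 0.2,
        "DCF with LTG": 0.1, "DCF with EBITDA Multiple": 0.1,
    }
    print(f"blended: EUR {blended_valuation(values, weights):,.0f}")
```

Normalising by the weight total means a later stage can simply shift weight from the qualitative methods to the DCFs without the weights having to sum to exactly 1.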

What that volume forces is rigour:

– Every formula in the report is mathematically defined and unit-tested — not pattern-matched from training data, not approximated.

– Every benchmark (industry betas, country risk premiums, growth rates, exit multiples) is sourced from a defined dataset and cited in the methodology appendix.

– Every assumption a user makes is traceable to a specific input field, with the input field bounded to a defensible range.

– Every methodology choice (when to weight Scorecard heavily vs. DCF, how to handle pre-revenue stages, how to discount for survival probability) is documented and consistent across all 160,000+ reports.
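The point about traceable, bounded inputs can be pictured as a small data structure. This is a minimal sketch with hypothetical names (`BoundedField`, `TracedAssumption`) and made-up bounds. It shows the idea of rejecting out-of-range inputs and recording which field each assumption came from, not Equidam’s actual input model.

```python
"""Bounded, traceable inputs: a sketch with hypothetical names and bounds."""
from dataclasses import dataclass


@dataclass(frozen=True)
class TracedAssumption:
    """An accepted value together with the input field it came from."""
    source_field: str
    value: float


@dataclass(frozen=True)
class BoundedField:
    """A user input restricted to an inclusive range [low, high]."""
    name: str
    low: float
    high: float

    def accept(self, value: float) -> TracedAssumption:
        if not self.low <= value <= self.high:
            raise ValueError(
                f"{self.name} = {value} is outside [{self.low}, {self.high}]"
            )
        return TracedAssumption(source_field=self.name, value=value)


if __name__ == "__main__":
    # Hypothetical bounds; real defensible ranges would come from the methodology.
    terminal_growth = BoundedField("terminal_growth_rate", 0.0, 0.05)
    print(terminal_growth.accept(0.02))
    try:
        terminal_growth.accept(0.20)
    except ValueError as err:
        print("rejected:", err)
```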

You can run an Equidam valuation through any AI assistant and not have to worry about whether the underlying numbers are correct, or whether the methodology was applied properly. They are, and it was. What the AI adds is interpretation — but only on a foundation that doesn’t move under it.

What we shipped: the Markdown export

This is the context for a feature we just shipped: a Markdown (.md) export of every Equidam valuation report, built specifically for AI assistants.

PDFs and Excel files were never designed for LLMs. The Markdown export is. Every number carries an explicit descriptive label next to it. Every valuation method comes with a plain-English description of how it works. The full methodology appendix — industry benchmarks, WACC build-ups, mathematical formulas, glossary of 30+ terms — is included inline.
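A Markdown table keeps each number on a row with its label and under its column header, so even a naive parser recovers the pairing that PDF extraction tends to break. This is a minimal sketch, assuming (hypothetically) that labelled figures appear as a simple pipe table; the real export’s layout may differ, and this parser ignores escaped pipes and simply skips the separator row.

```python
"""Parse a simple Markdown pipe table into labelled records."""


def parse_markdown_table(text: str) -> list[dict[str, str]]:
    """Return one dict per body row, keyed by the header cells.

    Assumes a header row, a separator row (|---|), then body rows; escaped
    pipes and multi-line cells are not handled.
    """
    lines = [line.strip() for line in text.strip().splitlines() if line.strip()]

    def cells(line: str) -> list[str]:
        return [cell.strip() for cell in line.strip("|").split("|")]

    header = cells(lines[0])
    return [dict(zip(header, cells(line))) for line in lines[2:]]


if __name__ == "__main__":
    # Hypothetical excerpt; the real export's layout and labels may differ.
    table = """
| Line item     | Year 4    | Year 5    |
|---------------|-----------|-----------|
| Revenue (EUR) | 1,200,000 | 1,900,000 |
| Discount rate | 14.0%     | 12.5%     |
"""
    for row in parse_markdown_table(table):
        print(row)
```

Each value comes back keyed by both its row label and its year column, which is exactly the pairing that collides when a table is linearised.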

It’s ~885 lines, ~44KB, and renders cleanly in any text editor, GitHub, Notion, Obsidian, or AI assistant.

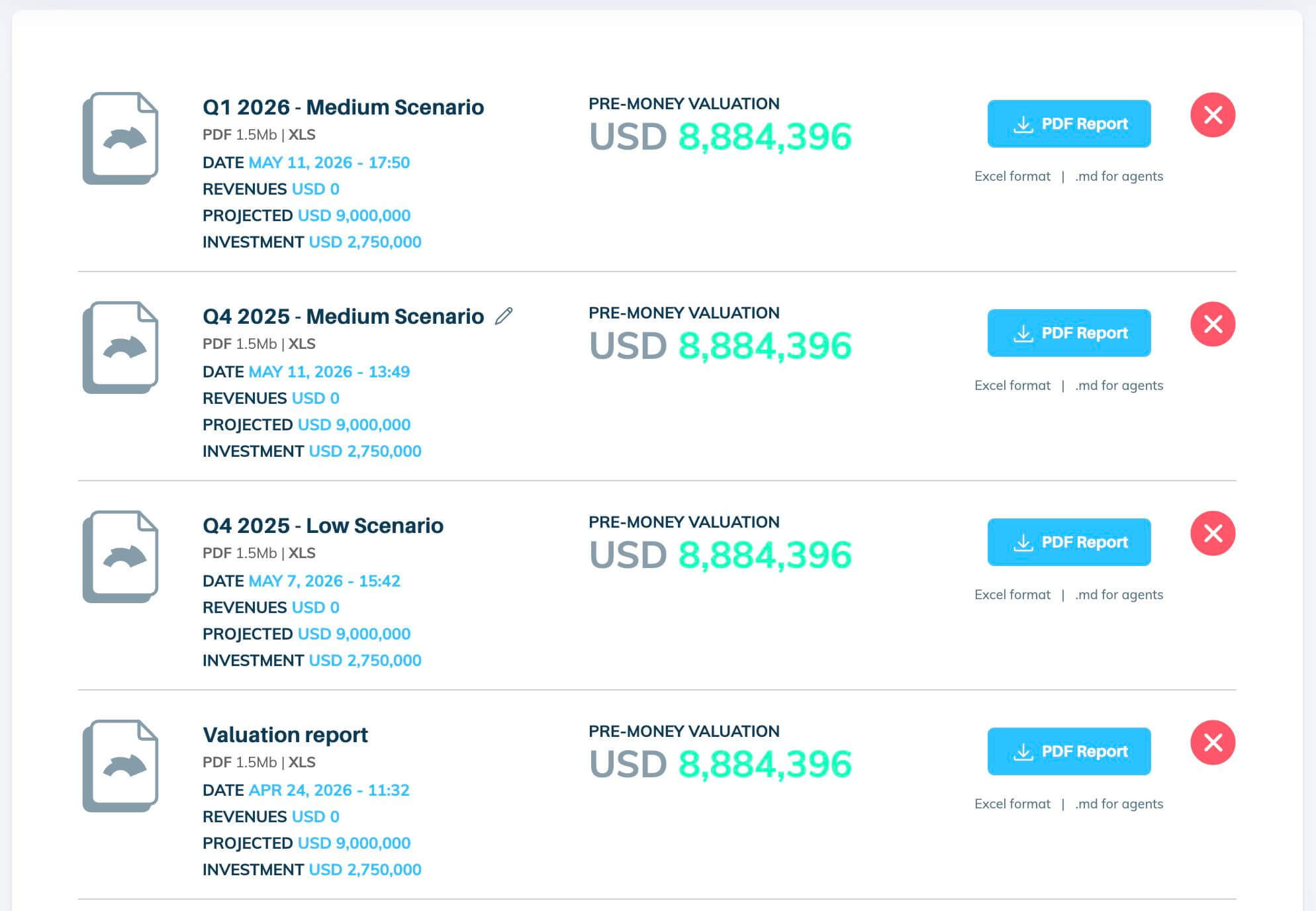

The workflow is: download the .md file from your Equidam Downloads page → upload to ChatGPT, Claude, Gemini, or your AI tool of choice → ask whatever you need. The file even ships with suggested prompts inside it, so you don’t have to invent the right question.

To our knowledge, Equidam is the first valuation platform to ship a purpose-built AI export format. It’s available now to all paying users, on the Downloads page of every report.

What this means going forward

AI assistants aren’t replacing valuation work. They’re changing who can engage with it, how fast, and at what depth. That’s a strict improvement — provided the underlying valuation is correct.

The risk of AI in finance isn’t that the tool is bad. It’s that an articulate tool reading a fuzzy input produces an articulate fuzzy answer. The countermeasure isn’t to avoid the tool. It’s to give it a source document built for it, grounded in methodology that has been pressure-tested across 160,000+ companies.

That’s the bet behind the Markdown export. And it’s why, at Equidam, we’ll keep investing in formats — and methodology — that hold up when an AI assistant is on the other end of the workflow.

Equidam values startup companies using transparent, methodology-grounded analysis trusted by 160,000+ founders, investors, and advisors.